2024

"Push-That-There"

Tabletop Multi-robot Object Manipulation via Multimodal Object-level Instruction

Keru Wang, Zhu Wang, Ken Nakagaki, Ken Perlin

About

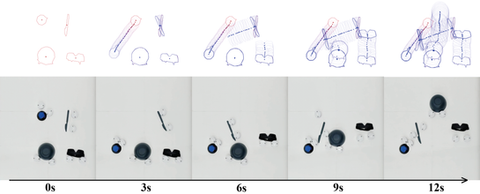

We present "Push-That-There", an interaction method and system, inspired by "Put-That-There", that enables multimodal, object-level user interaction with a multi-robot system that autonomously and collectively manipulates objects on tabletop surfaces. Rather than instructing individual robots, users directly specify how they want objects to be moved, and the system responds by moving the objects autonomously via our generalizable multi-robot control algorithm. The system supports various instruction modalities, including gestures, GUI, tangible manipulation, and speech, allowing users to intuitively create object-level instructions. We outline a design space, highlight interaction design opportunities facilitated by "Push-That-There", and provide an evaluation of our system's technical capabilities. While other recent HCI research has studied interaction with multi-robot systems (e.g., Swarm UIs), our contribution is the design and technical implementation of intuitive object-level interaction for multi-robot systems, which allows users to work at a high level rather than focusing on the movements of individual robots.

*This work was done in collaboration with the NYU Future Reality Lab.